Welcome to our comprehensive guide on feed-forward neural networks. In the ever-evolving landscape of artificial intelligence, understanding neural networks is essential. Neural networks are loosely modeled on the structure and function of the human brain, allowing computers to learn from data and make decisions in a way that resembles human reasoning.

These networks have become the backbone of many AI applications, ranging from image recognition to natural language processing. Among the many neural network architectures, feed-forward neural networks stand out for their simplicity and effectiveness.

In this guide, we’ll dive deep into feed-forward neural networks, exploring their architecture, training process, and applications. So, let’s set out to unravel how feed-forward neural networks work and how to harness their power in artificial intelligence.

What is a Feed Forward Neural Network?

A feed-forward neural network is a type of artificial neural network in which the connections between nodes do not form cycles. It is a fundamental building block of modern artificial intelligence systems, and it is often what people mean when they simply say "neural network".

Definition and basic structure

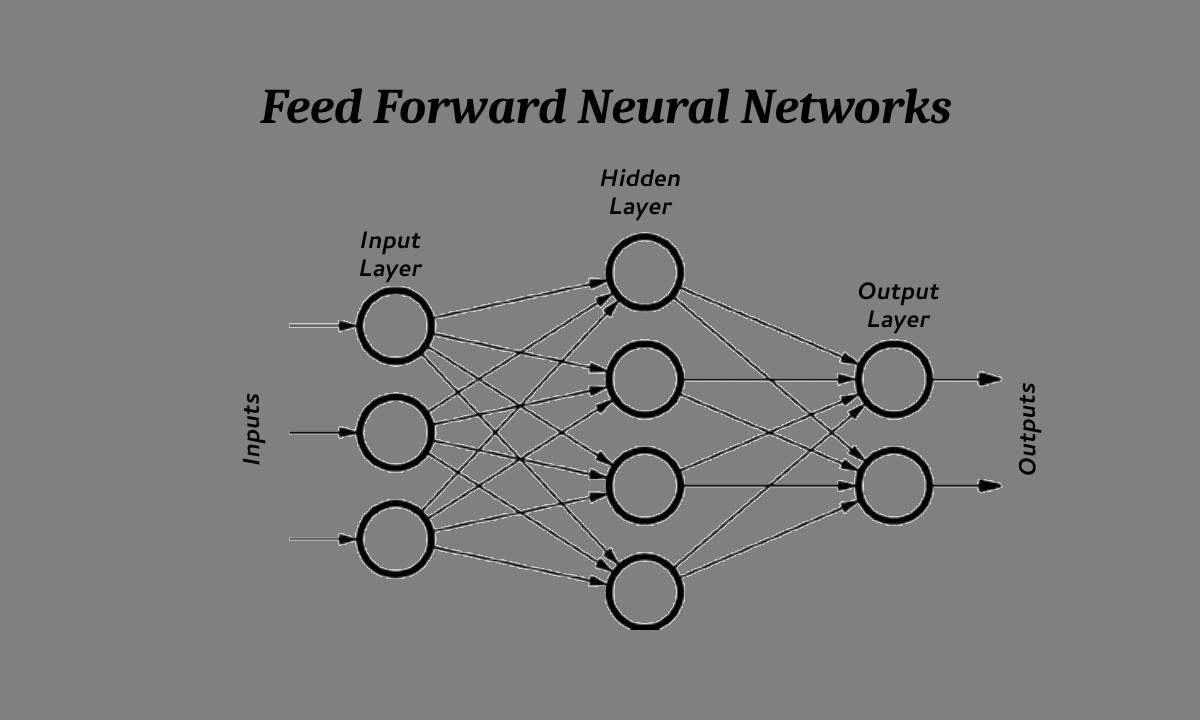

At its core, a feed-forward neural network consists of layers of interconnected nodes, or neurons, arranged in sequence. Each layer receives input from the previous layer and passes its output to the next layer, with no feedback loops. This one-directional flow of information from input to output is what gives feed-forward networks their name.

Comparison with other types of neural networks

Unlike recurrent neural networks (RNNs), which have feedback connections that allow them to exhibit dynamic temporal behavior, feed-forward neural networks lack such recurrent connections.

This distinction makes feed-forward networks particularly well suited to tasks requiring static input-output mappings, such as image classification or regression problems. While RNNs excel at processing sequential data, feed-forward networks excel at tasks where each input is independent of previous inputs.

In essence, feed-forward neural networks offer a straightforward architecture for processing data, making them a popular choice in many AI applications. Let’s look more closely at the components and workings of these networks to understand their capabilities and limitations.

Components of a Feed Forward Neural Network

Let’s examine the components that make up a feed-forward neural network and how they work together.

Input layer

The input layer serves as the gateway for data entering the neural network. Each neuron in this layer represents one feature or attribute of the input data.

For example, in an image recognition task, each neuron could correspond to a pixel value. The input layer performs no computation of its own; it simply passes the input data to the subsequent layers, starting the flow of information through the network.
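As a rough illustration of the pixel example, the sketch below turns a tiny grayscale image into the flat list of values an input layer would receive. The helper name `flatten_image` and the scaling of 0-255 intensities to the range 0-1 are assumptions made for this example, not something the guide prescribes.

```python
def flatten_image(image):
    """Flatten a 2-D grid of pixel intensities (0-255) into one feature list.

    Each returned value would feed one input neuron. Scaling to [0, 1] is an
    assumed (though common) preprocessing choice.
    """
    return [pixel / 255.0 for row in image for pixel in row]


# A hypothetical 2x2 grayscale image.
image = [
    [0, 255],
    [51, 102],
]
features = flatten_image(image)  # four features, one per pixel
```

A 2x2 image therefore needs four input neurons; a 28x28 image would need 784.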

Hidden layers

Nestled between the input and output layers, the hidden layers are where most of the computation happens. These layers consist of interconnected neurons, each computing a weighted sum of its inputs and applying an activation function to the result.

The number of hidden layers and the number of neurons in each layer are crucial design parameters that influence the network’s capacity to learn complex patterns from data.

Through successive transformations in the hidden layers, the network extracts progressively higher-level features from the input, which supports the task-specific learning process.
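To make the effect of these design choices concrete, here is a minimal sketch that counts the trainable parameters of a fully connected feed-forward network. The function name `count_parameters` and the example layer sizes are hypothetical; the count assumes every neuron connects to every neuron in the previous layer and has one bias.

```python
def count_parameters(layer_sizes):
    """Count weights and biases in a fully connected feed-forward network.

    layer_sizes lists the number of neurons per layer, input layer first.
    """
    total = 0
    for n_in, n_out in zip(layer_sizes, layer_sizes[1:]):
        total += n_in * n_out  # one weight per connection between the layers
        total += n_out         # one bias per neuron in the receiving layer
    return total


# Assumed example: 784 inputs, hidden layers of 128 and 64, 10 outputs.
n_params = count_parameters([784, 128, 64, 10])  # 109386
```

Widening or deepening the hidden layers raises this count quickly, which is one reason capacity has to be balanced against the amount of available data.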

Output layer

At the end of the neural network lies the output layer, where the network produces its predictions or classifications. The neurons in this layer represent the possible outcomes or classes of the task at hand.

For example, in a binary classification problem, the output layer could contain two neurons, each indicating the probability of belonging to one of the two classes (a single neuron with a sigmoid activation is a common alternative). The output layer combines the information processed by the hidden layers and generates the final output of the feed-forward neural network.
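One common way to turn the raw scores of such an output layer into class probabilities is the softmax function. The guide does not name it, so treat the sketch below as one assumed option; the input scores are made up for illustration.

```python
import math


def softmax(scores):
    """Convert raw output-layer scores into probabilities that sum to 1."""
    largest = max(scores)
    # Subtracting the largest score avoids overflow without changing the result.
    exps = [math.exp(s - largest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical raw scores from a two-neuron output layer.
probs = softmax([2.0, 0.5])
```

The class with the higher score receives the higher probability, and the two values always add up to 1.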

Understanding the specific roles and interactions of these components is essential for grasping what feed-forward neural networks can do. Next, let’s look at the activation functions that govern the behavior of individual neurons within these layers.

Activation Functions

Let’s examine the critical role of activation functions in feed-forward neural networks: how they shape the network’s behavior and enable complex computations.

Purpose and types of activation functions

Activation functions are the nonlinear transformations applied to the weighted sum of inputs at each neuron. They introduce nonlinearity into the network, which enables it to learn complex relationships in data; without them, a stack of layers would collapse into a single linear transformation. They play a crucial part in determining the output of a neuron and, in turn, of the whole neural network.

Various types of activation functions exist, each with distinct properties and suitability for different tasks. Understanding their characteristics and implications is vital for effectively designing and training feed-forward neural networks.

Common activation functions used in feed-forward networks

In feed-forward neural networks, a few activation functions have gained prominence because of their effectiveness and computational efficiency. Among these, the sigmoid function, the rectified linear unit (ReLU), and the hyperbolic tangent (tanh) function are the most widely used.

Each activation function has distinct properties that affect the network’s ability to learn and generalize: sigmoid squashes its input into the range (0, 1), tanh into (-1, 1), and ReLU passes positive values through unchanged while setting negative values to zero. By examining the characteristics and behavior of these common activation functions, we can gain insight into their effect on network performance and optimization.
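The three functions named above can be written in a few lines. This is a minimal sketch using only Python's standard library; the branch in `sigmoid` is an implementation choice made here to avoid overflow for large negative inputs.

```python
import math


def sigmoid(z):
    """Logistic sigmoid: output lies between 0 and 1."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)  # safe for very negative z, where exp(-z) would overflow
    return e / (1.0 + e)


def relu(z):
    """Rectified linear unit: zero for negative inputs, identity otherwise."""
    return max(0.0, z)


def tanh(z):
    """Hyperbolic tangent: output lies between -1 and 1."""
    return math.tanh(z)
```

For instance, `sigmoid(0.0)` is 0.5, `relu(-3.0)` is 0.0, and `tanh(0.0)` is 0.0.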

Forward Propagation

Let’s turn to forward propagation, the mechanism by which a feed-forward neural network transforms input data into meaningful predictions or classifications.

Explanation of the forward propagation process

Forward propagation, also called the forward pass, is the fundamental mechanism by which input data travels through the neural network, layer by layer, ultimately producing an output. The process begins at the input layer, where each neuron receives its input value. These inputs are then weighted and summed, incorporating the learned parameters (weights) associated with each connection.

The resulting values are then passed through the activation function of each neuron in the hidden layers, introducing nonlinearity into the network and enabling complex computations. This sequential flow of information continues through the hidden layers until it reaches the output layer, where the final predictions or classifications are produced.

Forward propagation captures the essence of feed-forward neural networks: input data is translated into useful output through successive transformations across the network’s layers.
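The layer-by-layer process described above can be sketched as follows. The names `dense` and `forward`, the tiny two-layer network, and its hand-picked weights are all hypothetical and serve only to make the flow of data visible.

```python
def relu(z):
    return max(0.0, z)


def identity(z):
    return z


def dense(inputs, weights, biases, activation):
    """One fully connected layer: weighted sum plus bias, then activation."""
    return [
        activation(sum(w * x for w, x in zip(row, inputs)) + b)
        for row, b in zip(weights, biases)
    ]


def forward(inputs, layers):
    """Pass the inputs through each layer in order and return the final output."""
    activations = inputs
    for weights, biases, activation in layers:
        activations = dense(activations, weights, biases, activation)
    return activations


# Hypothetical network: 2 inputs -> 2 hidden neurons (ReLU) -> 1 linear output.
layers = [
    ([[1.0, -1.0], [0.5, 0.5]], [0.0, -1.0], relu),
    ([[1.0, 2.0]], [0.1], identity),
]
output = forward([2.0, 1.0], layers)  # hidden layer gives [1.0, 0.5]; output is about 2.1
```

Each row of a weight matrix holds the incoming weights of one neuron, so the number of rows equals the number of neurons in that layer.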

Role of weights and biases

Central to forward propagation are the parameters known as weights and biases, which govern how input data is transformed as it passes through the neural network. Weights represent the strength of connections between neurons, controlling how much each input value influences the activation of the next neuron.

Biases, on the other hand, are additional parameters that shift each neuron’s weighted sum, giving the network extra flexibility to learn complex patterns from data.

During forward propagation, the inputs are multiplied by their respective weights and the bias is added at each neuron, shaping the activation levels and determining the network’s output.

By adjusting these parameters during training, feed-forward neural networks can learn from data and adapt their behavior to achieve the desired objectives.

Training a Feed Forward Neural Network

Let’s look at how a feed-forward neural network is trained: the mechanisms by which it learns from data and adjusts its parameters to achieve good performance.

Overview of the training process

Training a feed-forward neural network involves iteratively presenting labeled training data to the network and adjusting its parameters to minimize the difference between predicted and actual outputs, as measured by a loss function. The goal is to improve the network’s ability to generalize from the training data to unseen examples, thereby improving its predictive accuracy. Over successive iterations, the network refines its internal representations, gradually improving its performance on the task at hand.
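The "difference between predicted and actual outputs" is usually summarized as a single number. Mean squared error is one common choice for regression and is used below purely as an assumed example; the sample values are made up.

```python
def mean_squared_error(predicted, actual):
    """Average of the squared differences between predictions and targets."""
    if len(predicted) != len(actual):
        raise ValueError("predicted and actual must have the same length")
    return sum((p - a) ** 2 for p, a in zip(predicted, actual)) / len(predicted)


# Hypothetical predictions and targets for three training examples.
loss = mean_squared_error([2.5, 0.0, 2.0], [3.0, -0.5, 2.0])  # about 0.167
```

Training then amounts to changing the weights and biases so that this number goes down.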

Backpropagation algorithm

The backpropagation algorithm is central to training feed-forward neural networks because it enables the efficient computation of gradients of the error with respect to the network’s parameters. The algorithm works by propagating errors backward through the network, attributing them to individual neurons according to their contribution to the overall prediction error.

By iteratively adjusting weights and biases in the direction that reduces the error, training drives the network toward a good solution. This repeated cycle of forward and backward passes enables the network to learn complex patterns from data and refine its internal representations over time.
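A minimal sketch of the backward pass, assuming the smallest possible network: one input, one sigmoid hidden neuron, one linear output, and a squared-error loss. The function name and parameter names (`w1`, `b1`, `w2`, `b2`) are hypothetical. Each gradient is obtained by applying the chain rule from the output back toward the input.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def loss_and_gradients(x, target, w1, b1, w2, b2):
    """Forward pass, then backpropagation, for a 1-1-1 network."""
    # Forward pass.
    z1 = w1 * x + b1
    h = sigmoid(z1)              # hidden activation
    y = w2 * h + b2              # linear output
    loss = 0.5 * (y - target) ** 2

    # Backward pass: propagate the error from the output toward the input.
    dy = y - target              # d(loss)/dy
    dw2 = dy * h
    db2 = dy
    dh = dy * w2                 # error attributed to the hidden neuron
    dz1 = dh * h * (1.0 - h)     # sigmoid derivative is h * (1 - h)
    dw1 = dz1 * x
    db1 = dz1
    return loss, {"w1": dw1, "b1": db1, "w2": dw2, "b2": db2}


# Arbitrary example values.
loss, grads = loss_and_gradients(1.5, 1.0, w1=0.3, b1=-0.1, w2=0.8, b2=0.2)
```

A useful sanity check is to compare each analytic gradient with a finite-difference estimate: nudge one parameter slightly and measure how much the loss changes.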

Gradient descent optimization techniques

Gradient descent optimization techniques complement the backpropagation algorithm by moving the network’s parameters in the direction of steepest descent on the error surface.

These techniques, such as stochastic gradient descent (SGD) and its variants, adapt the learning rate and update rules to speed up convergence and avoid overshooting minima.

By efficiently navigating the high-dimensional parameter space, gradient descent methods help feed-forward neural networks converge to a good solution, although a global optimum is generally not guaranteed, improving their predictive performance and generalization ability.
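The update rule itself is short: subtract the gradient, scaled by the learning rate, from each parameter. The sketch below applies plain stochastic gradient descent to a one-neuron linear model so the result is easy to verify. The function name `sgd_fit`, the learning rate, the epoch count, and the synthetic data are all assumptions made for this illustration.

```python
import random


def sgd_fit(data, learning_rate=0.05, epochs=200, seed=0):
    """Fit y = w * x + b with stochastic gradient descent on squared error."""
    rng = random.Random(seed)
    w, b = 0.0, 0.0
    samples = list(data)
    for _ in range(epochs):
        rng.shuffle(samples)          # visit examples in a new random order
        for x, y in samples:
            error = (w * x + b) - y   # gradient of 0.5 * error**2 w.r.t. the prediction
            w -= learning_rate * error * x
            b -= learning_rate * error
    return w, b


# Synthetic, noise-free data generated from y = 2x + 1.
data = [(x, 2.0 * x + 1.0) for x in (-1.0, -0.5, 0.0, 0.5, 1.0)]
w, b = sgd_fit(data)  # w approaches 2 and b approaches 1
```

A learning rate that is too large makes the updates overshoot and diverge, while one that is too small makes convergence slow; variants of SGD differ mainly in how they adapt this step.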

Applications of Feed Forward Neural Networks

Feed-forward neural networks are versatile tools with wide utility across many domains, from image classification to medical diagnosis. Their ability to process complex data and learn intricate patterns makes them valuable assets in AI.

Image classification

In computer vision, feed-forward neural networks have transformed image classification by accurately sorting images into predefined classes.

Convolutional neural networks (CNNs), a specialized kind of feed-forward network, analyze pixel-level features and build hierarchical representations to identify objects, scenes, or patterns within images.

From autonomous vehicles to facial recognition systems, feed-forward neural networks power a wide range of image-based applications, improving efficiency and accuracy in visual recognition tasks.

Natural language processing

Feed-forward neural networks are widely used in natural language processing (NLP), for tasks such as sentiment analysis, text classification, and named entity recognition.

Sequential text is often handled by recurrent neural networks (RNNs), which are not feed-forward, or by transformer architectures, which include feed-forward sublayers. In these systems, the feed-forward components help extract semantic meaning and contextual information from text.

Combined with mechanisms that capture long-range dependencies, their adaptability to linguistic nuance makes them key tools for building robust NLP applications, ranging from chatbots to language translation systems.

Financial forecasting

In finance, feed-forward neural networks are used to predict market trends and stock prices and to assess financial risk.

By analyzing historical data and economic indicators, feed-forward networks learn underlying patterns and correlations, supporting more accurate forecasts and informed decision-making.

Their ability to model nonlinear relationships and adapt to changing market conditions makes them useful tools for financial analysts, traders, and investment firms seeking a competitive edge in the dynamic world of finance.

Medical diagnosis

In healthcare, feed-forward neural networks offer promising avenues for disease diagnosis, prognosis, and treatment planning. By analyzing medical imaging data, electronic health records, and genomic sequences, feed-forward networks help identify patterns indicative of various diseases and conditions.

From detecting anomalies in medical images to predicting patient outcomes, these networks give healthcare professionals valuable insights and decision-support tools, ultimately improving patient care and treatment outcomes.

Challenges and Limitations

Feed-forward neural networks come with a number of challenges and limitations that must be addressed to realize their full potential on complex problems across domains.

Understanding these obstacles is essential for devising effective strategies to mitigate their impact and to support continued progress in artificial intelligence.

Overfitting

One of the primary challenges faced by feed-forward neural networks is the risk of overfitting, in which the model captures noise and irrelevant patterns from the training data, leading to poor generalization on unseen examples.

Overfitting occurs when the network is too complex relative to the available data, resulting in memorization rather than learning.

Strategies such as regularization, data augmentation, and early stopping are used to combat overfitting and encourage the network to learn meaningful representations from the data.
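Of these strategies, early stopping is the simplest to sketch: watch the loss on held-out validation data and stop once it has failed to improve for a set number of epochs. The function name, the `patience` parameter, and the loss values below are hypothetical.

```python
def early_stopping_epoch(validation_losses, patience=3):
    """Return (best_epoch, best_loss), stopping after `patience` epochs without improvement."""
    best_loss = float("inf")
    best_epoch = -1
    for epoch, loss in enumerate(validation_losses):
        if loss < best_loss:
            best_loss, best_epoch = loss, epoch
        elif epoch - best_epoch >= patience:
            break  # no improvement for `patience` epochs in a row
    return best_epoch, best_loss


# Made-up validation losses: they improve, then drift upward as overfitting sets in.
losses = [0.90, 0.70, 0.55, 0.50, 0.52, 0.51, 0.56, 0.60]
best_epoch, best_loss = early_stopping_epoch(losses, patience=3)  # (3, 0.5)
```

In a real training loop the losses would arrive one epoch at a time, and the parameters saved at the best epoch would be restored after stopping.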

Vanishing and exploding gradients

Another challenge inherent to training feed-forward neural networks is the problem of vanishing and exploding gradients, in which gradients either shrink exponentially or grow uncontrollably as they propagate backward through the network during training.

This phenomenon can hinder convergence and impair the network’s ability to learn. Techniques such as gradient clipping, careful weight initialization, and activation functions that mitigate gradient saturation are used to address this challenge and stabilize training.
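Gradient clipping, the first technique listed, can be sketched in a few lines. The version below rescales the whole gradient vector when its Euclidean norm exceeds a threshold, which is one common variant; the function name `clip_by_norm` and the threshold are assumptions for this example.

```python
import math


def clip_by_norm(gradients, max_norm):
    """Rescale the gradient vector so its Euclidean norm is at most max_norm."""
    norm = math.sqrt(sum(g * g for g in gradients))
    if norm <= max_norm:
        return list(gradients)  # small enough already; leave unchanged
    scale = max_norm / norm
    return [g * scale for g in gradients]


# A gradient with norm 5 is scaled down to norm 1, keeping its direction.
clipped = clip_by_norm([3.0, 4.0], max_norm=1.0)  # roughly [0.6, 0.8]
```

Because only the length changes and not the direction, clipping limits the size of an update without changing which way the parameters move.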

Computational complexity

Feed-forward neural networks often grapple with computational complexity, particularly as the size and depth of the network increase. The sheer number of parameters and computations involved in training large-scale networks can strain computational resources and hinder real-time inference in practical applications.

Techniques such as model pruning, quantization, and parallelization are used to reduce computational cost and improve the efficiency of feed-forward neural networks, enabling their deployment in resource-constrained environments.
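As one illustration of pruning, the sketch below zeroes out the weights with the smallest absolute values, a simple approach often called magnitude pruning. The guide does not specify a pruning method, so the function name, the `fraction` parameter, and the weights are assumptions.

```python
def prune_by_magnitude(weights, fraction):
    """Set the given fraction of weights with the smallest magnitudes to zero."""
    if not 0.0 <= fraction <= 1.0:
        raise ValueError("fraction must be between 0 and 1")
    n_prune = int(len(weights) * fraction)
    # Indices sorted from smallest to largest absolute value.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    to_zero = set(order[:n_prune])
    return [0.0 if i in to_zero else w for i, w in enumerate(weights)]


# Hypothetical weights of one neuron; prune the smallest half.
pruned = prune_by_magnitude([0.8, -0.05, 0.3, 0.01, -0.6, 0.02], fraction=0.5)
# pruned == [0.8, 0.0, 0.3, 0.0, -0.6, 0.0]
```

Zeroed weights save memory and computation only when the storage format or hardware can skip them, and accuracy is usually re-checked (and the network often fine-tuned) after pruning.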

Conclusion

In conclusion, this comprehensive guide has taken a deep dive into feed-forward neural networks, shedding light on their architecture, training process, applications, and challenges.

From understanding the components of feed-forward networks to exploring their applications across domains such as image classification, natural language processing, financial forecasting, and medical diagnosis, we’ve seen the significant impact these networks have on the field of artificial intelligence.

Feed-forward neural networks serve as foundational pillars in the development of intelligent systems, driving innovation across industries. As we continue to explore feed-forward networks and push the boundaries of artificial intelligence, we invite you to share your thoughts and experiences in the comments below.

Remember to spread the word by sharing this guide with your friends and colleagues. Together, let’s work toward unlocking the full potential of feed-forward neural networks and shaping the future of artificial intelligence.